Supercomputing -- Catching The Last Wave

I had been waiting for the 11/06 Top 500 figures for Supercomputers. I had been expecting AMD to do well and this is what the figures show. However, the figures also show a worse situation for both Intel and IBM than I had expected. Since 2003 we've seen cycles of replacement in HPC but I think X86-64 will be the final cycle. And, the clear leader of that cycle is AMD.

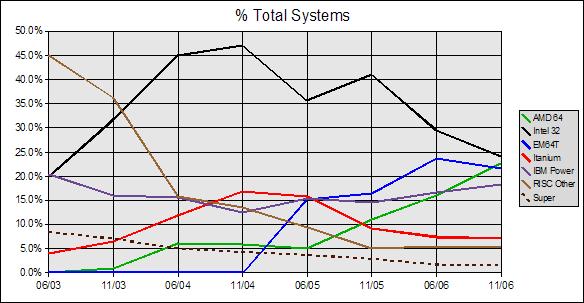

We can see that traditional supercomputing architectures from Cray, NEC, Hitachi, and Fujitsu have been on a slow but steady decline since 2003, as shown by the dashed line marked "Super". We can also see that the once dominant RISC systems of Alpha, PA-RISC, MIPS, and SPARC have plummeted during the same time frame, as shown by the brown line marked "RISC Other". This label distinguishes the other RISCs from IBM's Power series. We can see the rise and fall of Itanium. Power seems to be a shallower mirror image of Itanium with a smaller dip and then a rise. However, the real story is the trend for Intel X86.

It is clear that the Intel 32 bit systems of the old workhorse P4 Xeon swept in to replace the vanishing RISC architectures. It is common sense that when Intel 32 began to fall in 06/05 that Intel 64 would rise to replace it. And, by the graph it is clear that Intel 64 systems shot up at an incredible rate. However, 11/05 doesn't follow common sense at all. Intel 32 systems should still be falling and Intel 64 should be rising, but we don't see that. It can't be a general trend because we see that AMD 64 is moving up nicely. What appears to have happened is that having sampled the Intel 64 systems in 06/05 the market somewhat recoiled and fell back on the older Intel 32 bit systems. Again, it appears that the market sampled Intel 64 in 06/06 but once again found it lacking in some fashion and this shows as a decline in 11/06. The most likely culprit is the high power draw of the Prescott based Intel 64 systems.

With AMD the picture is quite different. In 06/04 the older RISC based systems were falling rapidly. This share was picked up mostly by Intel 32 and AMD 64 with Itanium taking share from traditional supercomputing systems and IBM's Power. Six months later, AMD is flat while Intel 32 continues to absorb share from the still falling RISC systems and Itanium continues to take Power share. Six months later in 06/05 AMD is still flat as Intel 64 takes off like a bullet taking share from the falling Intel 32 and RISC systems. Power appears to have reversed the trend and is taking back share from Itanium. Just six months later this all changes. In 11/05 Intel 64 has lost momentum and Itanium plummets even as RISC and traditional SC systems continue to decline.

Clearly when we look at the last 18 months of supercomputing AMD 64 stands out as the leader of the pack. Intel 32 has peaked and is falling; there are no indications of a revival from traditional SC, RISC, or Itanium. This only leaves Power, Intel 64, and AMD 64 to take up the slack from the fading Intel 32 systems. For the past 18 months AMD has risen steadily. Intel had appeared to be a contender but the fall in the last six months along with the earlier slowdown casts serious doubt on this. AMD's position is also bolstered looking forward by three announced large scale HPC wins while there have been no similar wins for Intel. With AMD's dominance in 4-way systems and its one year lead with Torrenza over Intel's Geneseo initiative it doesn't appear that this trend will change anytime soon. Intel won't have a real quad system for its C2D architecture until it releases a new chipset in late 2007. However, this chipset won't be suitable for HPC. This will put Intel at a disadvantage until perhaps mid 2008 to early 2009. However, AMD could be the dominant player in HPC long before then. AMD could top 40% by 2008 duplicating Intel's once strong position with Intel 32.

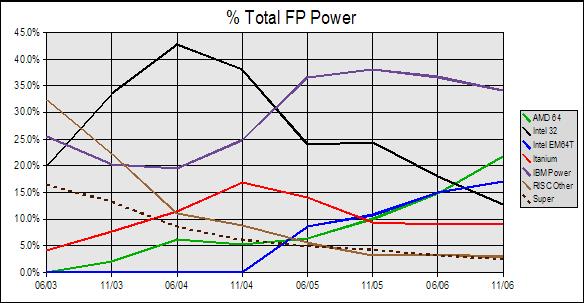

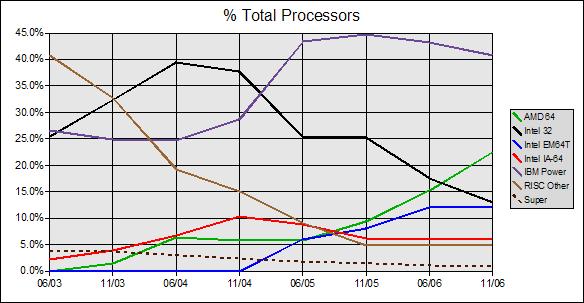

The cycle of waves is even easier to see if we look at the graphs for total power and total processors.

With the % of Total HPC power we can see that the first wave to replace the older RISC systems was Intel 32. The second wave to replace Intel 32 appeared at first to be both IBM's Power and Intel's Itanium; however, it is now clear that Itanium peaked in 11/04 and the second wave was Power alone. It is also clear now that Power peaked in 11/05, after never having quite reached the performance dominance of Intel 32, and has been declining for the past year. There doesn't seem to be much hope for a resurgence of Power based HPC since IBM has been using lightweight embedded derivatives of Power for Blue Gene which are not as good for general computing. IBM does have Cell but Cell is even worse for general computing than the embedded derivatives. This seems to be the reason why IBM is pairing Cell with Opteron. The % power graph also shows a much closer race between Intel 64 and AMD 64 with AMD pulling strongly ahead in the last six months. Also, the second graph tells a different story in terms of Intel's position with 64 bit systems. Although there have been more Intel 64 systems by count, these were apparently smaller systems, as the total performance is much closer.
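To make the difference between share by system count and share by total performance concrete, here is a minimal sketch. The list entries and their performance figures are made-up placeholders, not Top 500 data, and the function name `shares` is purely illustrative; the point is only that an architecture with more, smaller systems can lead in count while trailing in total performance.

```python
from collections import defaultdict

# Hypothetical list entries: (architecture, performance of one system in GFLOPS).
# These numbers are invented for illustration and are NOT Top 500 figures.
systems = [
    ("Intel 64", 3000.0),
    ("Intel 64", 3500.0),
    ("Intel 64", 4000.0),
    ("AMD 64", 9000.0),
    ("AMD 64", 6000.0),
]


def shares(entries):
    """Return {arch: (% of systems, % of total performance)}."""
    count = defaultdict(int)
    perf = defaultdict(float)
    for arch, gflops in entries:
        count[arch] += 1
        perf[arch] += gflops
    total_systems = len(entries)
    total_perf = sum(perf.values())
    return {
        arch: (100.0 * count[arch] / total_systems,
               100.0 * perf[arch] / total_perf)
        for arch in count
    }


result = shares(systems)
for arch, (pct_systems, pct_perf) in result.items():
    print(f"{arch}: {pct_systems:.1f}% of systems, {pct_perf:.1f}% of performance")
```

With these placeholder numbers the architecture holding the majority of systems ends up with the minority of total performance, which is the kind of gap the two graphs can reveal.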

The graph for numbers of processors is similar to the power graph.

However, one difference we can see is that in terms of numbers of processors used in HPC AMD was never behind Intel. They reached rough parity in 06/05 but AMD has been in the lead ever since. Both graphs suggest that within a year AMD will have surpassed Power both in terms of total performance and in terms of numbers of processors. This would appear to be good news for both Cray and Sun with mixed news for IBM. As IBM loses share with Power they do stand to gain with AMD HPC systems. However, Intel clearly has to do something if it plans to avoid being third in HPC behind both AMD and IBM. In terms of processors used for HPC Itanium's numbers are only slightly higher than the old RISC systems, which is pretty dismal to say the least, while Intel's 64 bit numbers are just a little over half (54%) of AMD's. By almost any standard Intel shows no current hope of being able to regain dominance in HPC. This seems to be a serious issue for Itanium as its market gets pinched ever smaller. Opteron has already driven Itanium out of the 4-way market and it appears that Opteron is also keeping Itanium out of the HPC market. It does not seem realistic for Itanium to survive in the sliver of the server market from 8-way to low end HPC. This also brings up the question of whether Intel is deliberately crippling Woodcrest in 4-way to keep it from rolling over Itanium. For Intel to turn things around it will take far more than they currently have and more than they have announced for the next year. However, whether the delay with 4-way is deliberate or just due to poor engineering, AMD is not waiting. Nor does it appear that AMD is waiting to become the final wave in HPC.

That X86-64 will be the last wave seems almost a certainty. There is no chance now for the older RISC systems to make a comeback and there are only two of the traditional supercomputer makers left: Cray and NEC. Itanium does not appear to be capable of challenging the current systems and is likely to fade further in the coming years. While Cray and NEC are working hard it is most likely that they will simply take back some small share that they have lost since 2003. The only real player left is IBM with Power. However IBM's use of lightweight Power derivatives which are only 32 bit and have limited memory addressing in Blue Gene suggests that this architecture too is unsuitable. This is further bolstered by Cell which is even more constrained than the lightweight derivatives. IBM is rapidly becoming a co-processor player rather than a main processor supplier for HPC. In fact, IBM may have to work hard to avoid being completely marginalized by GPU processing systems.

Intel should continue to share in the rise of X86-64 but the lack of announced large scale HPC systems is disturbing. Similarly disturbing is Intel's trend of doubling FSBs on its Northbridge chips. Essentially, the Intel Northbridge is like a mythical Hydra which survives by growing back two FSBs to replace the old one. Intel seems to be getting by with two FSBs now. However, there is some doubt that Intel can deliver quad FSB systems at a competitive price and it will be nearly impossible for Intel to move any higher. This leaves Intel once again facing a dead end while AMD simply beefs up its existing structure. If Intel doesn't do something quickly it will end up giving away nearly all of the top share of servers all the way from 4-way to large scale HPC to AMD. X86-64 seems to be the final wave in HPC with AMD in the lead now and most likely into 2008.

19 comments:

Thank you. I suppose with IBM the first question that comes to mind is why they didn't match Cell with one of their own Power based processors. From what I can tell, they have only used lightweight Power processors in the Blue Gene systems. These processors are embedded derivative CPUs with extra FP units added. Apparently, these would not have added enough in terms of general computing while the much beefier regular Power processors aren't efficient enough for large scale systems.

We should see several variations of this hybrid theme. For example, it appears that Cray will match their very powerful vector processors with Opteron for best all around performance. There is also the suggestion of hybrid massive threaded processors with Opteron. We should see systems with co-processors and there is always the potential for GPU based processing by 2008.

In all honesty I had been expecting the announcement of a large scale HPC system based on Woodcrest but no such system has been announced. I don't really know the reason for this. It could be that large systems are being planned but just haven't been announced yet. Or it could be that system makers have lost confidence in Intel after suffering through Prescott. The only other reason I can think of is that Intel systems have a very poor upgrade record while AMD just completed the overhaul of an older Opteron based HPC system with newer X2 Opterons.

It's way over my head, but nevertheless, terrific blog Scientia.

This was a great post and reflects what I have long said. AMD is concentrating on the HPC market and that's why they are in no hurry to release Barcelona.

They, as you noted, have owned the 4Way space in Transactions and with Torrenza right around the corner (I think the "Stream Processor" will ship with Barcelona) AMD will totally destroy the high end.

L3 will mean they can expand to 16/32 sockets with ease which will more than likely take out SuperDome.

Even with the current infrastructure Stream will catapult scientific research in addition to 8xxx. I expect there to be PCIe, HTX and cHT versions for different requirements.

Power 6 though will definitely keep IBM competitive as it will be twice as fast as Power 5.

Opteron is just doing so well because 8xxx is less than $3000 but packs a serious punch.

I can't wait to see the first numbers for Barcelona. It should get 60-70% over Opteron, maybe more, since they are adding SSE4A.

It seems like just adding the two cores would give them 60%.

But I digress, good post. Keep it up.

Power 6 though will definitely keep IBM competitive as it will be twice as fast as Power 5.

This will keep Power competitive in servers but only in the low end of HPC (like Itanium). IBM has no announcements for a large scale system with Power. However, IBM has announced large scale systems with Opteron and Cell. It will take longer for Power to flounder because IBM will use it preferentially in both its servers and mainframes. However, eventually even Power will be pushed aside by X86.

This will keep Power competitive in servers but only in the low end of HPC (like Itanium). IBM has no announcements for a large scale system with Power. However, IBM has announced large scale systems with Opteron and Cell. It will take longer for Power to flounder because IBM will use it preferentially in both its servers and mainframes. However, eventually even Power will be pushed aside by X86.

I also believe that X64 will be the wave of the future, but clusters will still be made with Power. It was said that IBM may make some of their chips 1207 compatible along with Sun.

That kind of thing will push other RISC arch's to the back burner in the next two years.

HTX is definitely a nail in the RISC coffin, since no one else uses it fully for interconnects.

HT3 will kill everything.

Slowly but steadily as RAS features are added to X86 these processors will become more and more suitable for traditional mainframe architectures. With the greater volume of X86 the margins will be reduced and this will ultimately make it too expensive to continue development of non-X86 processors. Itanium will be pushed out before Power because it does not have a dedicated mainframe market.

Insightful post Scientia.

I was a bit sick of listening to all the yapping about buying INTC from my bankers. They keep saying buy it because it's undervalued and it (Intel) could go back up to its former highs in 3Q-4Q.

The problem is today's bankers don't do much real research anymore. All they do is check the highs and lows of a stock without knowing the technological advantages (or disadvantages) of the company. All they do is copy-paste what someone from somewhere said.

I just read something interesting in the Doc's post. Something about Hyper Transport and Coherent Hyper Transport. Can you help enlighten us a little?

Your unbiased insights are very much appreciated. ^^

I highly doubt that since x86 is one of the worst instruction sets for HPC

Then you apparently aren't familiar with HPC today. There are only two of the heavy duty supercomputing processor architectures left: Cray and NEC. So far, these two are still slipping. In contrast, Blue Gene L is at the top of the pile and its processor is based on a lightweight embedded design that is only 32 bits.

The only thing I can think of is that your statement is based on a naive lumping together of Cell, the Blue Gene processor and Power. Power is a true heavy server and mainframe processor but isn't in the same class as Cray or NEC for HPC. The Blue Gene processor would be like Geode with extra SSE units. This processor is not a heavy server processor.

All current x86 CPUs also have way too much circuitry in them for speeding up single threaded applications.

As opposed to Sun's new chip? How many supercomputers are currently planned that use it?

Btw, did you know that single SPE in Cell has roughly 85-90% SP FP performance of K8 at the same clocks?

And, did you know that for general purpose processing Cell is perhaps 1/10th as fast at the same clock using all of the units? Cell is not suitable to be the main processor in any HPC system; it is too specialized. There are problems today that will not run on Blue Gene because of the processor limitations. Cell is even worse in this regard; there are a lot more problems that could not be run in a pure Cell architecture. There will be a convergence in this area but the architecture will not resemble Cell.

I just read something interesting in the Doc's post. Something about Hyper Transport and Coherent Hyper Transport. Can you help enlighten us a little?

I'm not sure which thread you are referring to. Could you be more specific? I could explain ccHT but I don't want to cover what you might already know. For example, you might already know that HT separates memory access from I/O and that ccHT is necessary for 2-way (dual socket) and higher with AMD processors.

Ho, Ho. The question of program efficiency on HPC systems has been around for more than 20 years. If you are truly suggesting that you don't think that there are programs that are not efficient on various HPC architectures then you have proven a staggering lack of knowledge on your part. The problem is far from limited to parallelization.

Secondly, for you to suggest that Cell is not highly specialized is again a stunning statement. Cell has serious limitations in code and data size as well as communications bandwidth. The one and only thing it is capable of doing well is parallel FP processing on lightweight problems. To suggest that you can just use a lot of these is beyond silly. Name even one IBM server or mainframe system that uses Cell as a main processor.

I realize that this area has been muddied quite a bit by such things as Intel's overly optimistic talk about its Teraflop board. This design however will be obsolete by 2009, long before it could actually be introduced.

Scientia: Could you be more specific?

Paul & Craig

hammerfall_prophet added a very interesting comment. I have only begun to realize the potential of ccHT, and that hypothetical conversation informed me that even if Intel goes with the H consortium, they still can't compete with AMD.

My knowledge of ccHT is limited to the comment that hammerfall made (but what an ingenious post!).

I suspect that a lot of people may not know the full potential of ccHT and therefore question AMD's architecture and scalability.

Can you tell us how ccHT transformed HPC and whether there are any companies (other than AMD) that use ccHT?

So, you are talking about a 1 year old parody post on Sharikou's blog.

ccHT is like a network for processors. In the same way that computers can share harddrives over an ethernet network, ccHT allows processors to share each other's memory space.

Non cache coherent HyperTransport is used to connect to I/O. This works in a similar fashion to the way that peripherals can be hooked to a computer with USB.

I wouldn't exactly say that ccHT has revolutionized HPC. The key is being able to join multiple memory spaces into a single larger space. IBM has been able to do this without ccHT. So, it isn't clear yet that ccHT is better. However, it is possible that Cray and Sun could make a comeback in HPC using ccHT. There is some suggestion that this could happen in 2007 and 2008. We'll just have to wait and see.

Some of the information in that article was incorrect. AMD's ccHT is based on the technology for the DEC Alpha bus. Intel would have access to this although it would be slowed down because AMD absorbed the entire Alpha team that worked on it.

Intel's biggest problem is that the Alpha bus dates back to 2001 and was less than HT 1.0. AMD is ready to release HT 3.0. What Intel is actually working on is CSI which is like an upgrade of PCI-e. Unfortunately, CSI won't be ready until 2009 and is not as good as HT 3.0 which will be out in 2007.

A memory controller on the processor die (IMC) would not be that difficult for Intel since they already have these on their Northbridge chips. The biggest problem here is not the IMC but the fact that it would require a new socket. Intel won't scrap 771/775 until it has the new socket ready that Xeon will share with Itanium. This won't happen until late 2008/early 2009. It will probably happen for Itanium first in late 2008 but not for Xeon until 2009.

What Intel is actually working on is CSI which is like an upgrade of PCI-e.

I thought that was Geneseo?

Unfortunately, [I hope] CSI won't be ready until 2009 and [I hope] is not as good as HT 3.0 which will be out in 2007.

I could go on and on, but I think you declare unknowns as fact rather matter of factly:/

Well, Red that seems like an odd statement. Everything that I say is unknown until there is an official announcement. Intel was supposed to have CSI ready in 2007 but that will not happen. So, Intel fans are now hoping for early 2008.

The problem with the 2008 idea is pretty much everything. CSI needs an onboard memory controller and a new socket. The timeline I've seen for the new socket is early 2009. There is nothing to indicate new motherboards and chips with a new socket in early 2008. Geneseo is not CSI. Geneseo is an upgrade to the PCI-e spec that would bring it closer to where Intel needs to be for CSI. Remember, PCI-e exists today but CSI only exists as an idea. The Geneseo upgrade would get the PCI-e hardware to about what Intel needs for CSI. Think of it this way, Geneseo is Intel's belated attempt to compete with Torrenza but at 1-2 years behind.

CSI is not as good as HT 3.0. CSI has higher latency and is missing split mode, power saving mode, distance mode, and hot connect.

Everything that Intel did at IDF suggests that CSI will not be released before 2009. The groundwork and hardware for Geneseo should be getting in place after mid 2008. This builds a good foundation for CSI in 2009.

So, Intel fans are now hoping for early 2008.

Oh boy:D So what does it add to your argument? I don't need to go around saying Quad FX sucks, you AMD fanbois were wrong, I can just say Quad FX sucks:)

http://www.theregister.co.uk/2006/09/21/intel_open_chips/

http://www.leschamb.com/free/doc031302--Free-Spreadsheet-Downloads.html

Click "Microsoft enterprise spreadsheet to be killer app for personal supercomputers" of 2nd link.. Direct link doesn't work? They're talking about CSI now.

I think they will also have a point-to-point bus in the near future.

Ash, if 2009 is a hopeful date, implying 2010+, I wonder what Chang considers the near future.

Scientia, when I reference obscure things, I at least back it up with a link. I have no idea where you are getting your numbers from..for CSI performance/features, socket date:) And I don't have a problem with you thinking that it'll be 2009 but the way you say it almost sounds like you're passing it off as fact.

Chang is very, very vague on his comments. For example, he says, "HyperTransport 3.0, which is expected to come out in one or two years".

The actual date is less than one year. If Chang doesn't know when HT 3.0 will be released then what significance would his "in the near future" comment have for Intel?

Red, if you have a source that says that CSI and the new socket will be out in 2008 then by all means post it.

~6 months earliest possibility of Barcelona, still in 2007, '1' year away from now. Perhaps he's using the same logic that you're using that CSI will come Q408/Q109, therefore, CSI will come in '3' years. You specify that some random fanboi declares Nehalem in H108, how about telling us what half of 09:)

Scientia, you provided the unsubstantiated claims of CSI performance/features, socket date.

http://www.vr-zone.com/?i=4182

Perhaps they are not to your liking, but at least I actually provided a source. Where's yours?

Thanks Scientia. Real insights are difficult to find and having someone willing to share them impartially is even more precious.

Thank you and keep up the good work. No need to concern yourself too much with people who have ADD. ^^

Quad FX sucks period. Performance, wattage, overclockability. The only real world test where I see Quad FX ahead is..

http://www.hwupgrade.com/articles/cpu/10/vegas_1_vista.png

And the Q6600 is pretty much on par/better than it everywhere else.

http://blogs.zdnet.com/Ou/?p=382&page=2

Judging by comments by AMD, it seems even they realize how much of a dud it is.

I spoke with AMD today by phone to ask them about the poor performance results...and they tried to direct my attention to the platform. AMD points out that this will usher in a new era of dual socket desktop PC computing though companies like Dell and Apple have been selling dual socket Intel PCs for months. AMD points out that this is for the desktop market rather than the workstation or server market though I still don't understand what difference it makes other than the name we give it.

I don't know what 'irrelevant' benches you're seeing. And there is no amount of tweaking to justify double the wattage, even if Quad FX could be potentially 5% ahead in general. There are benches on [H] and TechReport where Quad FX is more favorable, but still a dud. But considering that QX6700 is running for $1700 on Newegg, FX74 isn't that bad.. Except that Dailytech claims Q107 availability for Quad FX, and at that time, Q6600 which is on par/cheaper with FX74 will arrive.

Oh yeah, a bunch of jabber about 'correlation'. I remember how passionate you were about QX6700 being 'just' 30% better for DivX. Now Quad FX is ~similar, but the tests are irrelevant because it doesn't achieve your 95% scaling?

Your power argument that 2 QX6700s is on par with 2 K8Ls is silly. Somehow I feel like Intel is not going to stoop to dual socket ridiculousness to compete in something that they don't need to.

Intel's server version is here..? Tigerton will be essentially Clovertowns, not doubling Tulsas. Tulsas are 95W/150W, Clovertown are 80W/120W.
